Enterprise AI adoption is accelerating rapidly, but the path from pilot to scale remains uneven. In McKinsey’s 2025 global survey, 88% of organizations reported using AI in at least one business function, yet only 7% said AI had been fully scaled across the organization. A separate McKinsey analysis also found that nearly two-thirds of organizations are still in the experimentation or piloting phase, even as AI use becomes widespread. This widening gap between adoption and scale reveals something important: the challenge is no longer simply getting AI into the enterprise. It is helping the enterprise absorb it.

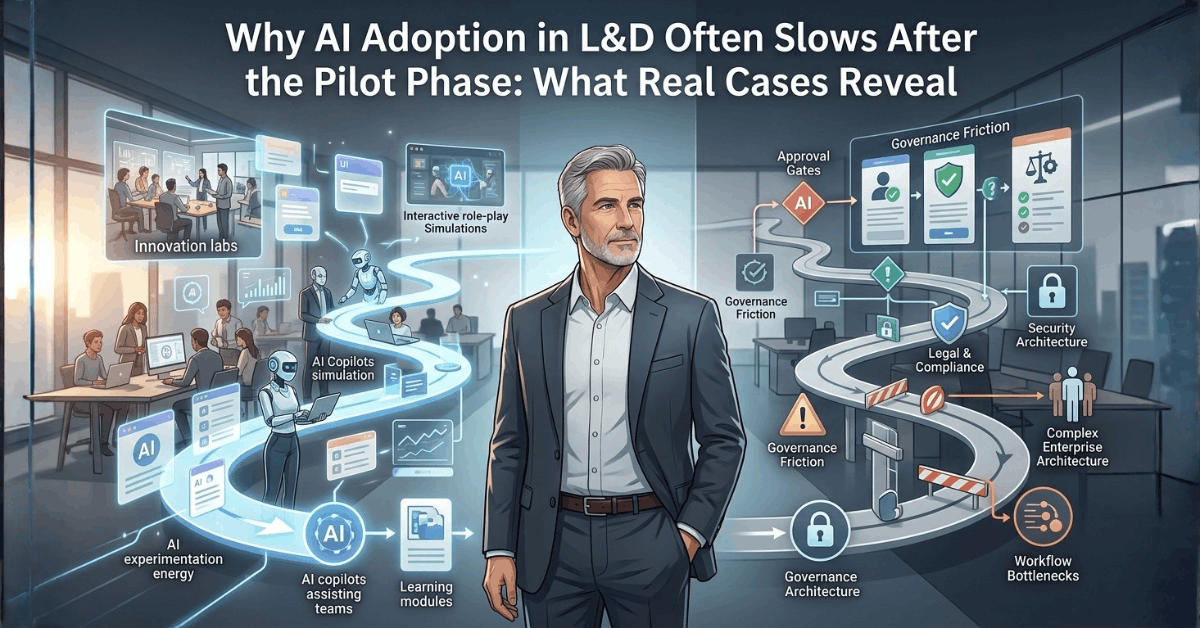

What begins as a promising AI pilot often runs into a familiar set of enterprise realities: security concerns, legal and compliance reviews, data governance uncertainty, and unclear ownership. In several organizations, these factors slow progress not because the use case lacks potential, but because the surrounding system has not yet built the structures required to trust, manage, and scale it.

Drawing on patterns observed across large organizations adopting AI in workplace learning, this article explores why AI pilots often lose momentum at precisely the point where they should accelerate. It argues that the issue is not governance as a constraint, but governance as an underdeveloped organizational capability that has not yet caught up with the speed of AI experimentation.

This article draws on emerging findings from a joint research initiative by CommLab India and researchers at Lancaster University exploring how artificial intelligence is shaping workplace learning across large organizations. The patterns discussed here are informed by anonymized interviews with enterprise learning leaders across multiple industries, offering insight into why promising AI pilots often struggle to move beyond early experimentation.

AI Pilots Rarely Stall for the Reason People First Assume

When AI pilots lose momentum inside large organizations, the default explanation is often that the technology did not deliver enough value. Sometimes that is true. But in many enterprise settings, pilots do not slow down because they are uninteresting, ineffective, or poorly conceived. They stall because they collide with the organizational systems that determine what can be used, where, by whom, and under what conditions.

That collision usually happens after the initial excitement.

A learning team experiments with a promising AI tool. Early results are encouraging. Content development becomes faster. Simulations become more realistic. Drafting becomes easier. Small wins begin to accumulate, and the pilot starts to feel like the beginning of something larger.

Then a different set of questions begins to surface.

Can confidential information be entered into the system?

Where is the data going?

Who owns the output?

Can it be used in regulated environments?

What happens if the model produces something inaccurate or non-compliant?

Who is ultimately accountable?

At that point, what looked like a straightforward productivity opportunity becomes something more complex: a governance challenge.

This is where many AI pilots begin to slow down. And in large organizations, that slowdown is rarely accidental. It is structural.

The Pattern Across Large Organizations: AI Moves Faster Than Governance

One of the clearest patterns across the organizations studied was that AI adoption usually began at the edge of the system rather than at its center.

A learning team tested a role-play tool.

A designer experimented with a generative co-pilot.

A business unit explored AI-generated simulations.

A team used AI informally to speed up content development.

In the early stages, this experimentation often happened quickly because it was small, local, and outcome-driven. The value was visible, the risk felt containable, and the barriers were not yet fully activated.

But once teams tried to move from experimentation to broader adoption, they encountered a very different organizational reality.

AI capabilities had moved ahead. Governance frameworks had not. That created a familiar enterprise tension: The organization wanted the benefits of AI, but had not yet built the structures required to trust it.

This is not unique to learning and development. It is increasingly visible across enterprise AI adoption more broadly. McKinsey’s recent research shows that while AI use is broadening quickly, most organizations are still in the early stages of scaling and capturing enterprise-level value.

This is why many AI pilots do not fail during experimentation itself. They stall in the transition between local experimentation and enterprise legitimacy.

Why AI Pilots Encounter Friction So Quickly

At first glance, this friction can look like resistance to innovation. But in most large organizations, that interpretation is too simplistic.

What is often happening is that AI is surfacing unresolved questions the organization has not yet answered structurally. Questions about data, risk, accountability, ownership, review, and approval were always present in some form. AI simply makes them harder to ignore because it introduces new uncertainty into already complex systems.

Across the seven interview cases, four barriers appeared repeatedly:

Security and data protection concerns

Teams were often unsure what information could safely be used in AI tools and what risks might be introduced by experimentation.

Legal and compliance approval cycles

Promising use cases often slowed once they entered formal review and sign-off pathways.

The need for private or enterprise-controlled AI environments

Organizations became more open to AI only when it could be used inside approved, governed systems.

The rise of shadow AI when official access was blocked or delayed

Employees often continued using AI informally even when sanctioned pathways were unavailable.

These barriers did not necessarily mean organizations were anti-AI. In many cases, they were highly interested in AI and actively exploring it. The problem was that interest alone was not enough to overcome institutional uncertainty.

Why AI Pilots Slow Down in Large Organizations

| What teams want to do | What the organization needs to know first |
|---|---|
| Experiment with AI tools quickly | Whether data, privacy, and IP risks are acceptable |
| Use AI to accelerate workflow | Whether outputs can be trusted and governed |
| Scale promising use cases | Whether there is a secure, approved environment |
| Move from pilot to production | Whether ownership, review, and accountability are clear |
| Enable teams to innovate | Whether controls exist to prevent unmanaged risk |

1. Security and Compliance Concerns Often Arrive Before Scale Does

One of the most immediate reasons AI pilots slow down is that they trigger concerns about information security and regulatory exposure.

This was especially visible in organizations working with:

proprietary internal knowledge

regulated communication

customer-facing simulations

sensitive employee or business data

industry-specific compliance requirements

In several cases, teams found that tools which seemed perfectly useful at a local level were quickly flagged once broader questions emerged about where data was stored, whether prompts were retained, whether outputs could expose confidential information, and whether usage might create downstream compliance risk.

These are not abstract concerns. They are rational enterprise concerns.

Public AI tools and externally hosted systems can introduce real risk around confidentiality, data leakage, and governance gaps when sensitive content is entered into unmanaged environments. That is one reason many organizations initially respond to AI experimentation by restricting access rather than expanding it.

From the perspective of the learning team, this can feel frustrating and unnecessarily cautious. From the perspective of security and compliance, it often feels prudent and overdue.

The real problem is not that one side is right and the other is wrong. It is that the organization often lacks a shared mechanism for deciding how to experiment safely.

That is where pilots begin to stall.

Why security concerns become a scaling issue so quickly

Pilots often begin informally

What feels low-risk to a small team may look very different once viewed through enterprise controls.

AI tools are often easier to access than to govern

Employees can test tools quickly, but organizations need time to understand the implications.

The same tool may be low-risk in one context and high-risk in another

A harmless drafting use case can become far more sensitive when internal knowledge or regulated scenarios are involved.

This is why security questions often arrive before scale does. The organization is not only evaluating the tool. It is evaluating the consequences of normalized use.
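The idea that the same tool can be low-risk in one context and high-risk in another can be made concrete with a small sketch. The example below is purely illustrative and was not part of the research: the `UseCase` fields, the three tiers, and the rules inside `classify` are all hypothetical assumptions, chosen only to show risk being attached to the context of use rather than to the tool itself. A real organization would define its own categories with legal, security, and compliance teams.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    """Hypothetical tiers; real categories would be set by the organization."""
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class UseCase:
    """Hypothetical description of one proposed use of an AI tool."""
    tool: str
    uses_internal_knowledge: bool = False  # proprietary internal content
    uses_sensitive_data: bool = False      # sensitive employee or business data
    regulated_context: bool = False        # regulated communication or industry rules
    customer_facing: bool = False          # e.g. customer-facing simulations


def classify(use_case: UseCase) -> RiskTier:
    """Assign an illustrative tier from the context of use, not from the tool name."""
    if use_case.uses_sensitive_data or use_case.regulated_context:
        return RiskTier.HIGH
    if use_case.uses_internal_knowledge or use_case.customer_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# The same (hypothetical) tool, used in two different contexts.
drafting = UseCase(tool="drafting-assistant")
compliance_sim = UseCase(
    tool="drafting-assistant",
    uses_internal_knowledge=True,
    regulated_context=True,
)

print(classify(drafting).value)
print(classify(compliance_sim).value)
```

Under these assumed rules, the generic drafting use case lands in the lowest tier while the regulated scenario lands in the highest, even though the tool is identical. The value of writing the rules down, in whatever form, is that a team can see in advance which uses are likely to need fuller review.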

2. Legal and Approval Cycles Are Often Built for Slower Technologies

Another recurring barrier across the interviews was the speed mismatch between AI experimentation and enterprise approval processes.

AI tools evolve quickly. Enterprise review processes do not.

In several organizations, promising AI use cases were not rejected outright. Instead, they were slowed by the accumulation of review steps involving:

procurement

legal

privacy

compliance

IT security

data governance

sometimes regional or business-unit-specific sign-off

This created a very familiar dynamic. By the time a pilot was reviewed, the tool had already changed. Or the use case had evolved. Or employees had already found another way to do the work.

This is one of the most under-discussed reasons AI adoption stalls in large organizations: the organization is not built to evaluate fast-moving tools at the speed they emerge. That does not mean review should be bypassed. It means review must be redesigned.

When governance appears only as a gate at the end of experimentation, it almost guarantees friction. But when governance is involved earlier, more proportionately, and with clearer risk categories, it becomes much easier to distinguish low-risk experimentation from high-risk deployment.

That is where many organizations are still maturing.

Why approval cycles create hidden drag

Traditional review models assume slower technology lifecycles

AI tools evolve too quickly for static approval models to remain effective.

Cross-functional review often lacks a shared AI vocabulary

Different functions may evaluate the same pilot through completely different lenses.

Unclear ownership leads to delayed decisions

When no one clearly owns the risk, everyone becomes cautious.

This is not simply a process issue. It is a capability issue inside the organization.

3. Private AI Environments Often Become the Turning Point

One of the clearest patterns across the cases was that organizations often moved from restriction to progress only when secure or enterprise-controlled AI environments became available.

In the early stages, many teams were blocked from using public-facing tools because of concerns related to:

IP protection

confidential data exposure

prompt retention

external model training

unclear terms of use

But once secure enterprise environments were introduced, often through private copilots, internal instances, or approved enterprise platforms, the conversation changed significantly.

This became a turning point in several cases. What had previously been framed as “AI is too risky to use” began to shift toward “AI may be usable if it stays inside a controlled environment.” That distinction matters enormously.

In many large organizations, the real issue is not whether AI can be useful. It is whether AI can be used in a way that fits the organization’s trust architecture.

Private or enterprise-controlled AI environments often help create that fit by allowing organizations to:

restrict data exposure

control access and permissions

monitor usage

define acceptable inputs

create clearer review pathways

reduce the need for blanket prohibition

This is increasingly how large enterprises are trying to balance innovation with control: not by eliminating experimentation, but by bringing it into environments they can govern more effectively. Organizations that scale AI more successfully tend to pair experimentation with stronger internal controls, clearer governance ownership, and better infrastructure for safe use.

In practice, this often marks the difference between AI as a blocked experiment and AI as a legitimate enterprise capability.

4. When Official Access Is Slow, Shadow AI Fills the Gap

One of the most revealing patterns across enterprise AI adoption is that blocking AI rarely stops AI use altogether. It simply changes where it happens.

When official tools were restricted, delayed, or difficult to access, several organizations observed a quieter but important phenomenon: employees began using AI informally anyway.

They used public tools to:

summarize content

draft messages

generate ideas

rewrite text

support productivity tasks

speed up work outside approved systems

This is often referred to as shadow AI: the use of AI tools without formal approval or oversight.

Shadow AI is usually not driven by malicious intent. More often, it emerges because employees are trying to solve real productivity problems faster than the organization is able to respond. But that is precisely what makes it risky.

When AI use happens outside approved systems, organizations lose visibility into:

what data is being entered

which tools are being used

whether outputs are being relied on in sensitive contexts

how broadly AI is already shaping work behind the scenes

That creates a paradox many organizations are now confronting: The more tightly AI is blocked, the more likely it is to reappear informally. That is why governance cannot rely on prohibition alone. If employees see real value in AI, demand will not disappear. It will route around the system.

This is not only a security issue. It is also a design issue. When organizations fail to provide sanctioned, usable pathways for experimentation, they often create the conditions for unmanaged adoption instead.

The Governance Paradox in Enterprise AI

| Governance approach | Likely outcome |
|---|---|
| No guardrails | Fast experimentation, high unmanaged risk |
| Blanket restriction | Slower official adoption, higher shadow AI risk |
| Slow approvals with unclear ownership | Pilot fatigue and stalled momentum |
| Secure, usable enterprise environments | Higher trust, safer experimentation, clearer path to scale |

The Deeper Problem: Governance Is Often Treated as a Barrier, Not a Capability

Perhaps the most important insight from the interviews is that governance is often discussed in the wrong way. It is commonly treated as the thing that slows AI down. But that framing misses the deeper issue.

The real problem is not that governance exists. It is that governance is often underdeveloped relative to the pace and nature of AI adoption.

Large organizations are now being asked to govern technologies that are:

rapidly evolving

easy to access

difficult to classify neatly

capable of touching multiple systems at once

already being used before formal structures are in place

That requires a different kind of governance than many enterprises currently have. Not heavier governance. Better governance.

That means governance that can:

distinguish experimentation from deployment

classify use cases by risk

involve legal and security early without slowing everything equally

create approved environments for safe testing

make accountability visible

support innovation instead of only policing it

This is not a back-office concern. It is becoming a strategic organizational capability. And until that capability matures, many AI pilots will continue to stall at exactly the point where they should be scaling.

Most AI Pilots Do Not Stall Because the Technology Fails

In large organizations, AI pilots often stall not because the tools are unhelpful, but because the organization has not yet built the governance capacity needed to absorb them confidently. That is a very different diagnosis.

Across the organizations studied, the same pattern appeared repeatedly:

AI experimentation moved quickly.

Governance moved slowly.

The gap between the two became the real barrier.

What these cases make visible is that the real challenge is not simply whether AI can be useful in L&D. In many instances, that has already been established.

The harder question is what organizations need to put in place once that usefulness becomes visible. If AI is to move beyond scattered experimentation and become part of how learning work is actually done, then the next challenge is no longer about pilots. It is about pathways.

That is the question the next article in this series takes up: what large organizations need to do differently to move AI in L&D beyond the pilot phase and toward meaningful scale.

—RK Prasad (@RKPrasad)